Subspace - 3h Has Arrived!

Gemini 3h has finally arrived! You should definitely read the entire migration post on the forum:

https://forum.subspace.network/t/migrating-from-gemini-3g-to-gemini-3h/2428

There are quite a few big changes, especially in how the commands are run, so switching to the new chain is not plug and play like before. I exclusively use Docker, and if you are on Linux, I strongly recommend running Docker as well. I’ll be putting out a guide for new farmers on running Docker this week. For those who are already running Docker, and more specifically have followed my guide, this post should get you to 3h.

We need to do the following things to get running on 3h:

1. Stop and Remove Containers
2. Wipe Node Data
3. Wipe Farm Data
4. Update Stack File
5. Run

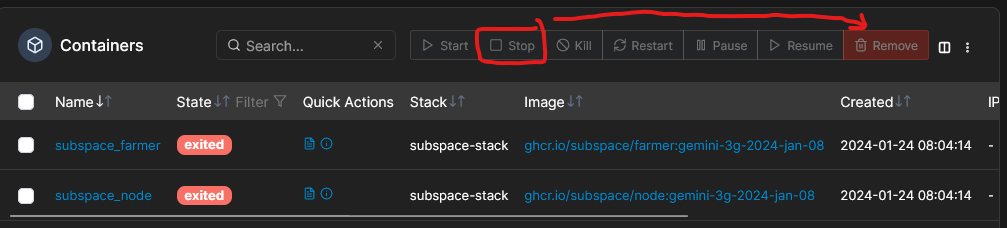

First, stop your node + farmer in Portainer and then remove the containers. You don’t need them anymore.
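If you would rather use the terminal than Portainer, the same step can be sketched with the Docker CLI. The container names `subspace_node` and `subspace_farmer` come from the stack file in this post; your 3g containers may be named differently, so check `docker ps -a` first. The `run` helper and the `DRY_RUN` variable are illustrative additions, not part of Docker: by default the script only prints what it would do.

```shell
#!/bin/sh
# Sketch: stop and remove the old farmer and node containers.
# DRY_RUN=1 (the default here) only prints the commands; set DRY_RUN=0 to execute them.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "would run: $*"
    else
        "$@"
    fi
}

remove_containers() {
    # Arguments: container names (assumed here; confirm with `docker ps -a`).
    for name in "$@"; do
        run docker stop "$name"
        run docker rm "$name"
    done
}

# Farmer first, since it depends on the node.
remove_containers subspace_farmer subspace_node
```

Once the printed commands look right, run it again with `DRY_RUN=0`.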

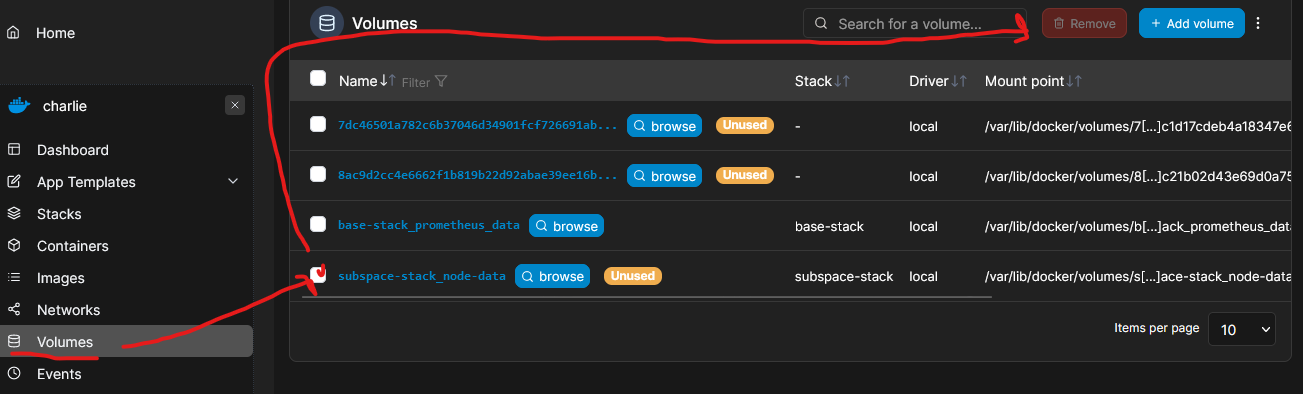

Next we need to delete the node volume. So click on “Volumes”, select the volume used by your node, and then click “Remove”.
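The volume can also be removed from the command line. Stacks usually prefix volume names with the stack name, so the `node-data` volume will typically show up as something like `<stack>_node-data`. The name `subspace_node-data` below is only a placeholder; `docker volume ls` shows the real one. As a precaution, this sketch prints the command unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Sketch: remove the node's named volume from the command line.
# VOLUME is a hypothetical name; find the real one with `docker volume ls`.
VOLUME="${VOLUME:-subspace_node-data}"
DRY_RUN="${DRY_RUN:-1}"

remove_volume() {
    vol="$1"
    if [ -z "$vol" ]; then
        echo "no volume name given" >&2
        return 1
    fi
    if [ "$DRY_RUN" = "1" ]; then
        echo "would run: docker volume rm $vol"
    else
        # Docker refuses to remove a volume that a container still references,
        # so the old containers have to be removed first.
        docker volume rm "$vol"
    fi
}

remove_volume "$VOLUME"
```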

Now go into the terminal and remove all the farm data from your drives. Be careful that you are deleting the right files!
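Because a mistyped path is hard to undo, one option is to preview a directory before wiping it. The function below is a minimal sketch with a hypothetical name, `wipe_dir_contents`. It lists what is inside the directory and only deletes when you pass `yes` as a second argument. It refuses an empty path, `/`, or anything that is not a directory.

```shell
#!/bin/sh
# Sketch: preview and then wipe the contents of a farm directory, with guard rails.
wipe_dir_contents() {
    target="$1"
    confirm="${2:-no}"

    # Refuse empty paths, the filesystem root, and anything that is not a directory.
    if [ -z "$target" ] || [ "$target" = "/" ] || [ ! -d "$target" ]; then
        echo "refusing to wipe: '$target'" >&2
        return 1
    fi

    echo "contents of $target:"
    ls -A "$target"

    if [ "$confirm" = "yes" ]; then
        # Delete everything inside the directory (dotfiles included),
        # but keep the directory (for example a mount point) itself.
        find "$target" -mindepth 1 -delete
    else
        echo "dry run only; pass 'yes' as the second argument to delete"
    fi
}
```

For example, `wipe_dir_contents /media/subspace/subspace01` previews the first farm disk, and adding `yes` deletes its contents. Unlike `rm -r` with a `*` glob, this also removes hidden files. If the files are owned by root, run the whole script with `sudo`, since `sudo` cannot call a shell function directly.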

    sudo rm -r /path/to/your/files/*

Let’s get back into Portainer and update the stack file. I found it easier to start fresh instead of updating the existing stack for each change. Here is what the new stack file should look like:

    version: "3.7"

    services:
      node:
        container_name: subspace_node
        image: ghcr.io/subspace/node:gemini-3h-2024-jan-31-2
        volumes:
          - node-data:/var/subspace:rw
        ports:
          - "0.0.0.0:30333:30333/udp"
          - "0.0.0.0:30333:30333/tcp"
          - "0.0.0.0:30433:30433/udp"
          - "0.0.0.0:30433:30433/tcp"
        restart: unless-stopped
        command:
          [
            "run",
            "--chain", "gemini-3h",
            "--base-path", "/var/subspace",
            "--blocks-pruning", "256",
            "--state-pruning", "archive-canonical",
            "--listen-on", "/ip4/0.0.0.0/tcp/30333",
            "--dsn-listen-on", "/ip4/0.0.0.0/udp/30433/quic-v1",
            "--dsn-listen-on", "/ip4/0.0.0.0/tcp/30433",
            "--rpc-cors", "all",
            "--rpc-methods", "unsafe",
            "--rpc-listen-on", "0.0.0.0:9944",
            "--farmer",
            "--name", "hhw-bravo"
          ]
        healthcheck:
          timeout: 5s
          interval: 30s
          retries: 60
        networks:
          spacenet:
            ipv4_address: 172.18.0.201

      farmer:
        container_name: subspace_farmer
        depends_on:
          node:
            condition: service_healthy
        image: ghcr.io/subspace/farmer:gemini-3h-2024-jan-31-2
        volumes:
          - /media/subspace/subspace01:/subspace01:rw
          - /media/subspace/subspace02:/subspace02:rw
          - /media/subspace/subspace03:/subspace03:rw
          - /media/subspace/subspace04:/subspace04:rw
        ports:
          - "0.0.0.0:30533:30533/udp"
          - "0.0.0.0:30533:30533/tcp"
        restart: unless-stopped
        command:
          [
            "farm",
            "--node-rpc-url", "ws://node:9944",
            "--listen-on", "/ip4/0.0.0.0/udp/30533/quic-v1",
            "--listen-on", "/ip4/0.0.0.0/tcp/30533",
            "--reward-address", "st9uEvR9ZqnovgwmZ5s7rWAh2ACNZzBdVsBNHdjmqBUgXHS9B",
            "path=/subspace01,size=3900G",
            "path=/subspace02,size=3900G",
            "path=/subspace03,size=3900G",
            "path=/subspace04,size=3900G",
            "--farm-during-initial-plotting", "false",
            "--sector-encoding-concurrency", "4",
            "--sector-downloading-concurrency", "5"
          ]
        networks:
          spacenet:
            ipv4_address: 172.18.0.202

    volumes:
      node-data:

    networks:
      spacenet:
        driver: bridge
        ipam:
          driver: default
          config:
            - subnet: 172.18.0.0/16
              gateway: 172.18.0.1

Keep in mind that I have mine set up for the following:

- I have 4 disks, so I set sector encoding concurrency to 4 and sector downloading concurrency to 5 in my farmer service.
- Update the reward address with your own.
- Update ‘--name’ for the node with your own.
- I have set --farm-during-initial-plotting to false. Farming during initial plotting is not needed until 3h is incentivized, and you may see errors if you leave it as true.
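If your disk count differs from mine, the per-disk entries in the farmer command can be generated rather than typed by hand. The sketch below prints the `path=...,size=...` entries and the two concurrency flags for a list of farm directories. Setting encoding concurrency to the number of disks is what I describe above. Setting downloading concurrency to disks + 1 is only an extrapolation from my 4 and 5, not a documented rule. The function name `farmer_args` is hypothetical.

```shell
#!/bin/sh
# Sketch: print farmer command entries for a set of farm directories.
# Usage: farmer_args SIZE PATH [PATH...]
farmer_args() {
    if [ "$#" -lt 2 ]; then
        echo "usage: farmer_args SIZE PATH [PATH...]" >&2
        return 1
    fi
    size="$1"
    shift
    count="$#"

    # One entry per farm directory, all with the same size.
    for p in "$@"; do
        printf '"path=%s,size=%s",\n' "$p" "$size"
    done

    # Assumption: encoding = number of disks, downloading = number of disks + 1.
    printf '"--sector-encoding-concurrency", "%s",\n' "$count"
    printf '"--sector-downloading-concurrency", "%s"\n' "$((count + 1))"
}

farmer_args 3900G /subspace01 /subspace02 /subspace03 /subspace04
```

Paste the printed lines into the farmer service's command list, and treat the concurrency values as a starting point to tune for your own hardware.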

Everything else is pretty much default. As of right now I am getting plenty of peers but no blocks. This is likely because it is still very early for the new network, so by the time you are doing this your experience may be different.

Happy plotting!